
Puppeteer only supports NodeJS, it is maintained by the Google team and supports Chrome (Firefox support will come later, it's experimental at the moment). Selenium is the oldest one, it has librairies in almost all programming languages and support every major browsers. The three most used APIs to run headless browsers are Selenium, Puppeteer and Playright. By using a Headless Browser, you're more likely to bypass those automated test and get the target HTML page.

The other benefit of using a Headless Browser is that many websites are using "Javascript challenge" to detect if an HTTP client is a bot or a real user. That's one of the reason why Headless Browsers are so important. If you were using a regular HTTP client that doesn't render the Javascript code, the page you'd get would be almost empty.

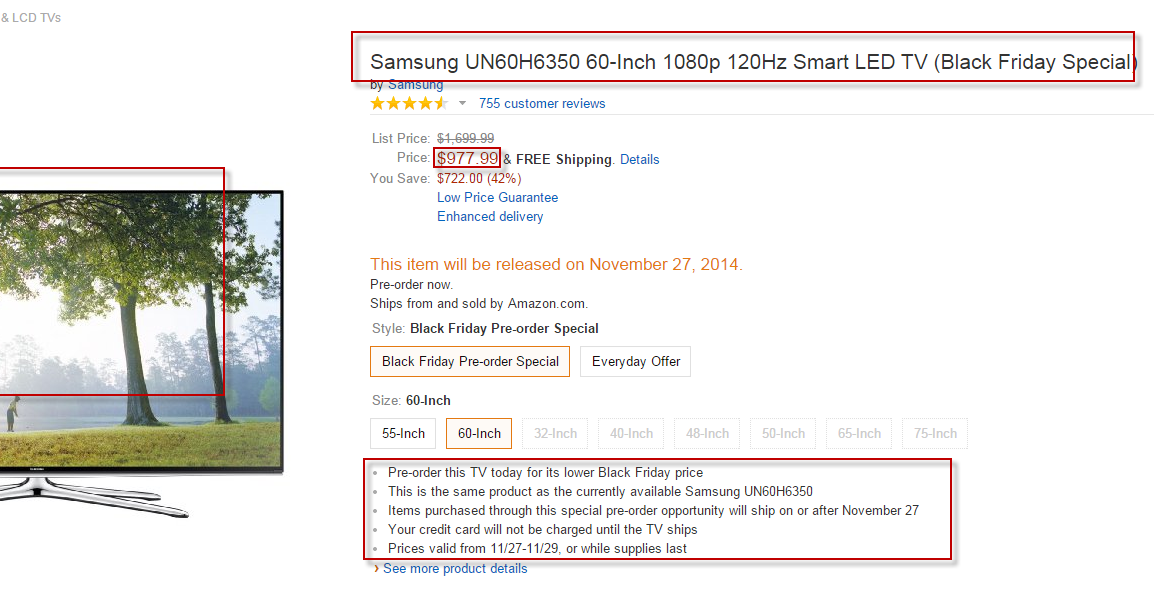

Those Javascript frameworks use back-end API to fetch the data and client-side rendering to draw the DOM (Document Object Model). There are more and more websites that are built using shiny front-end frameworks like Vue.js, Angular.js, React.js. Headless Browsers are another important layer in modern web scraping. Then there is a hybrid type of proxies that combines the best of the two world: ISP proxies Some websites block data-center IPs entirely, in that case you will need to use a residential IP address to access the data. There are two types of proxies, data-center IPs and residential proxies. Depending on where your servers are located and the target website you want to extract data from, you might need proxies in another country.Īlso, having a large proxy pool is a must-have in order to avoid getting blocked by third-party website. An American website will display a price in Dollars for US people based in the US. For example, an online retailer will display prices in Euros for people within the European Union. Many websites display different data based on the IP address country. Proxies are a central piece of any web scraping operation. Extraction rules (XPath and CSS selectors).You web scraping stack will probably include the following: In-house web scraping pipelineĪs an example let's say you are a price-monitoring service extracting data from many different E-commerce websites. There are many different technologies and frameworks available, and that's what we are going to look at in this part. If you are a tech company or have in-house developers this is generally the way to go.įor large web-scraping operations, writing your own web scraping code is usually the most cost-effective and flexible option you have. In this part we're going to look at the different ways to extract data programmatically (using code). 
How to extract data from the web with code In our experience with ScrapingBee, these are the main use cases that we saw the most with our customers.Of course, there are many others. Other industries like online retailers are also monitoring E-commerce search engine like Google Shopping or even marketplace like Amazon monitor and improve their rankings. Search engine results: Monitoring search engine result page is essential to the SEO industry to monitoring the rankings.Lead generation: When you have a list of websites that are your target customer, it can be interesting to collect their contact information (email, phone number.) for your outreach campaigns.They extract reviews from lots of different websites about restaurants, hotels, doctors and businesses. Review aggregation: Lots of startups are in the review aggregation business and brand management.Influencer marketing agencies are getting insights from influencers by looking at their followers growth and other metrics. Social media: Many companies are extracting data from social media to search for signals.News aggregation: News websites are heavily scrapped for sentiment analysis, as alternative data for finance/hedge funds.It's also a gold mine for market research. Real estate: Lots of real-estate startups need data from real-estate listings.

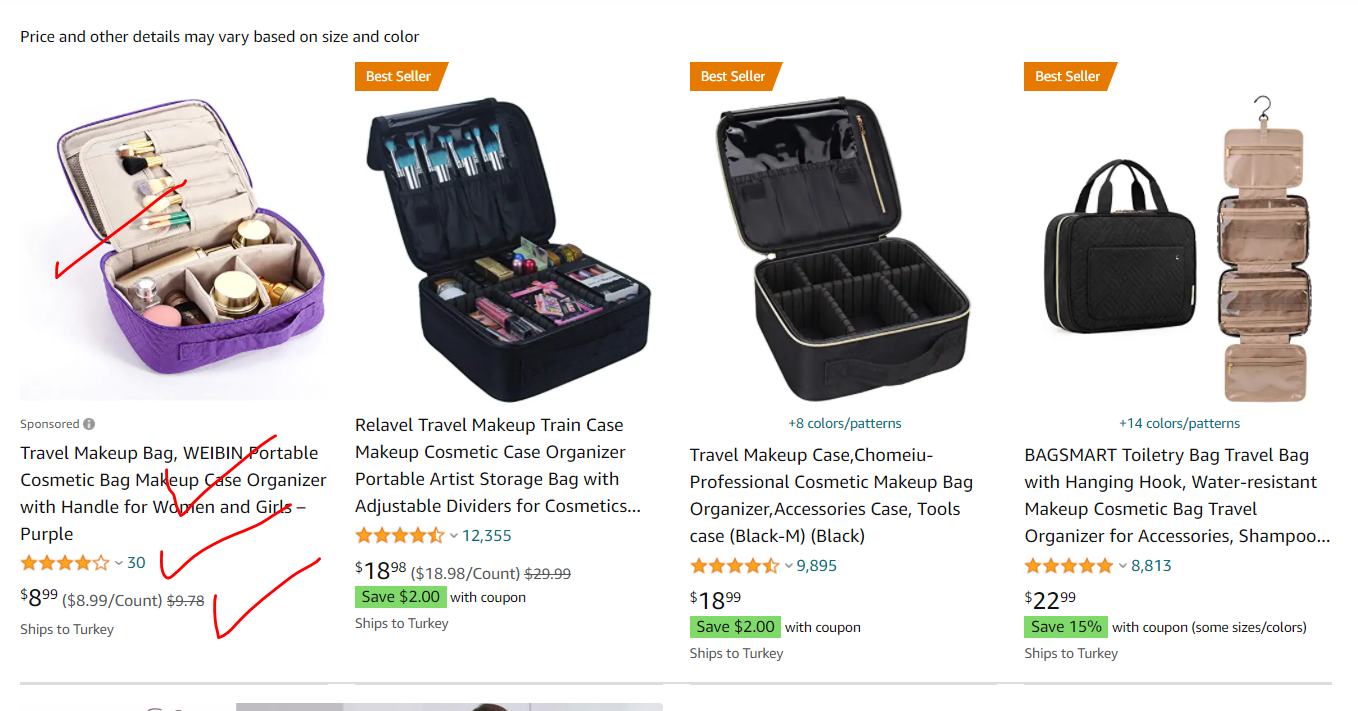

They monitor their competitors's stock, price changes, sales, new product. Online price monitoring: Many retailers are monitoring the market online in order to dynamically change their pricing.Here are some interesting web scraping use cases: What are the different use cases for web scraping? From building your web scraping pipeline in-house, to web scraping frameworks and no-code web scraping tools, it's not an easy task to know what to start with.īefore diving into how to extract data from the web, let's look at the different web scraping use cases.
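To make the "almost empty page" problem with client-side rendering more concrete, here is a minimal sketch using only Python's standard library. It extracts the text a visitor would see from two HTML documents without running any JavaScript, which is what a regular HTTP client does. Both HTML snippets, the `/api/products/42` endpoint and the `visible_text` helper are made up for illustration; they are not taken from any real website or library.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text outside <script>, <style> and <title> elements."""

    SKIPPED_TAGS = {"script", "style", "title"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self._skip_depth == 0 and text:
            self.chunks.append(text)


def visible_text(html):
    """Return the visible text of an HTML document, without executing JavaScript."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical server-rendered page: the data is already in the HTML.
SERVER_RENDERED = """
<html><body>
  <h1>Blue Widget</h1>
  <p class="price">19.99 EUR</p>
</body></html>
"""

# Hypothetical client-rendered page: the HTML is an empty shell, and the
# data only appears after the browser runs the script.
CLIENT_RENDERED = """
<html><body>
  <div id="app"></div>
  <script>
    fetch('/api/products/42')
      .then(r => r.json())
      .then(p => { document.getElementById('app').textContent = p.name; });
  </script>
</body></html>
"""

if __name__ == "__main__":
    print(repr(visible_text(SERVER_RENDERED)))
    print(repr(visible_text(CLIENT_RENDERED)))
```

For the server-rendered snippet the helper returns the product name and price, while for the client-rendered one it returns an empty string. A headless browser closes that gap by executing the script before you read the DOM.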
